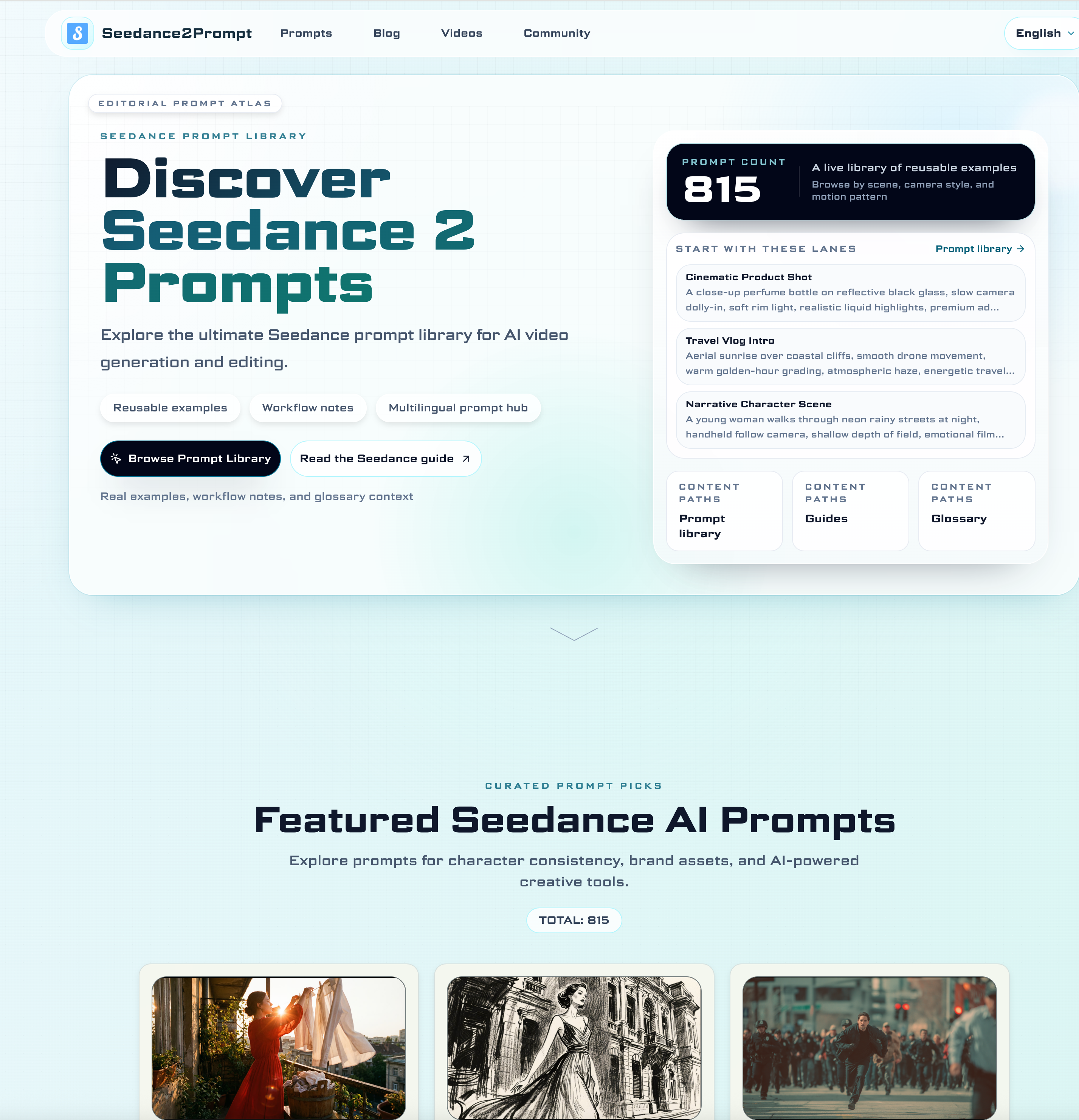

What is Seedance 2.0 and what can I create with it?

Seedance 2.0 is a multimodal text-to-video model focused on controllability and cinematic quality. You can generate short clips from text, combine text with reference images, and refine motion language, camera movement, and scene transitions for social content, ads, and storytelling projects.

How should I write better Seedance prompts?

Use a structured format: subject, scene, camera movement, action, style, and output constraints. Start with concise core instructions, then add details only when needed. In our prompt library, each example is grouped by use case so you can reuse the pattern and swap only the variables.
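As a rough illustration of that structure, the sketch below assembles a prompt from the six fields named above. The `PromptSpec` class, the `build_prompt` helper, and the "Label: value" line layout are hypothetical conventions chosen for this example; Seedance does not require this exact syntax, and no Seedance API is called here.

```python
from dataclasses import dataclass, fields, replace


@dataclass
class PromptSpec:
    """Hypothetical container for the six prompt fields listed above."""
    subject: str
    scene: str
    camera_movement: str
    action: str
    style: str = ""               # optional detail, added only when needed
    output_constraints: str = ""  # optional detail, e.g. duration or aspect ratio


def build_prompt(spec: PromptSpec) -> str:
    """Join the non-empty fields into one labeled line per field."""
    lines = []
    for f in fields(spec):
        value = getattr(spec, f.name).strip()
        if value:  # skip details that were left empty
            label = f.name.replace("_", " ").capitalize()
            lines.append(f"{label}: {value}")
    return "\n".join(lines)


# Start with the concise core instructions only.
base = PromptSpec(
    subject="a red vintage bicycle",
    scene="rain-soaked city street at dusk",
    camera_movement="slow dolly-in",
    action="the bicycle rolls past neon signs",
)

# Reuse the pattern and swap only the variables that change.
variant = replace(base, subject="a yellow taxi", style="film noir, high contrast")

print(build_prompt(base))
print()
print(build_prompt(variant))
```

Keeping the fields separate makes it easy to see which variable changed between two generations, which helps when comparing results.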

Where can I learn the complete Seedance workflow?

Start with the Blog tutorial, then move on to the advanced sections on prompt control, transition design, and quality optimization. We also include video walkthroughs and community case studies so you can quickly copy proven production workflows.

Can beginners use this site effectively?

Yes. The site is designed for both beginners and advanced users. Beginners can follow section-based examples and tutorials, while advanced creators can combine multimodal references, editing-first prompt templates, and rhythm control techniques for more precise outputs.

Seedance 2.0 is an AI video generation tool aimed at creators who want cinematic quality and precise control. Unlike text-to-video systems that often produce inconsistent or generic output, Seedance 2.0 uses a multimodal framework that lets users combine text, reference images, and structured prompts to produce more refined video. This makes it suitable for social media clips, advertising creatives, and narrative storytelling projects.

One of its key strengths is controllability. Rather than writing a single free-form prompt, users are encouraged to follow a structured format that covers subject, scene, camera movement, action, style, and output constraints, which improves the stability and quality of the results. By starting with a concise core idea and layering in details gradually, creators can fine-tune everything from motion language to scene transitions, so the output feels intentional and cinematic rather than random or generic.

Another advantage is the learning material around the model. Blog tutorials, advanced workflow guides, and real-world case studies explain not just the “what” but also the “how” of effective prompt design. These resources are especially useful for creators who want to replicate professional production workflows, because they show how to structure prompts for multi-shot sequences, maintain visual consistency, and optimize overall video quality.

Seedance 2.0 is accessible to both beginners and experienced users. Beginners can get started quickly by following step-by-step examples and pre-structured prompts, while advanced creators can use multimodal references, editing-first prompt strategies, and rhythm control techniques for more precise results. The workflow therefore scales with the user’s skill level.

Overall, Seedance 2.0 combines ease of use with professional-grade control for video generation. It helps creators move beyond basic outputs and produce polished, cinematic videos consistently and efficiently.