Choosing the right API

There are multiple APIs for transcribing or generating audio:

| API | Supported modalities | Streaming support |
| --- | --- | --- |
| Realtime API | Audio and text inputs and outputs | Audio streaming in and out |
| Chat Completions API | Audio and text inputs and outputs | Audio streaming out |
| Transcription API | Audio inputs | Audio streaming out |
| Speech API | Text inputs and audio outputs | Audio streaming out |

General use APIs vs. specialized APIs

The main distinction is general use APIs vs. specialized APIs. With the Realtime and Chat Completions APIs, you can use our latest models' native audio understanding and generation capabilities and combine them with other features like function calling. These APIs can be used for a wide range of use cases, and you can select the model you want to use.

On the other hand, the Transcription, Translation, and Speech APIs are specialized: each works with specific models and is meant for one purpose.

Talking with a model vs. controlling the script

Another way to select the right API is to ask yourself how much control you need. To design conversational interactions, where the model thinks and responds in speech, use the Realtime or Chat Completions API, depending on whether you need low latency.

You won't know exactly what the model will say ahead of time, as it will generate audio responses directly, but the conversation will feel natural.

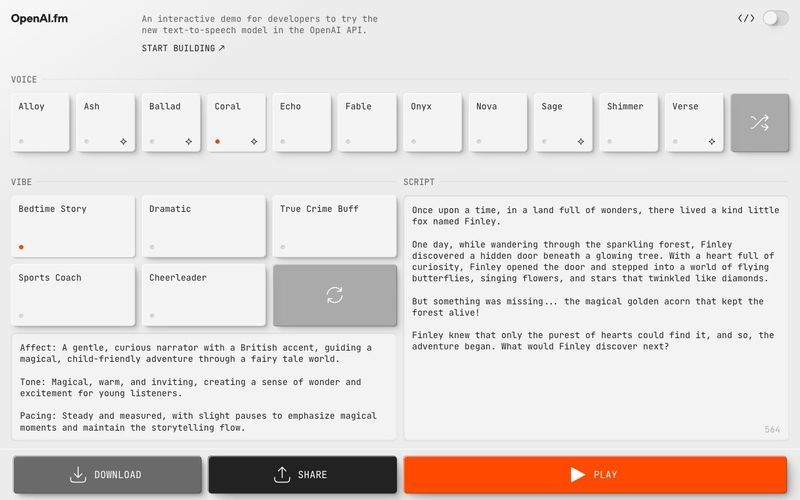

For more control and predictability, you can use the Speech-to-text / LLM / Text-to-speech pattern, so you know exactly what the model will say and can control the response. Note that chaining these three steps adds latency.

This is what the Audio APIs are for: pair an LLM with the audio/transcriptions and audio/speech endpoints to take spoken user input, process it to generate a text response, and then convert that response to speech that the user can hear.
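The chained pattern can be sketched as three pluggable steps run in sequence. This is a minimal sketch rather than a definitive implementation: `VoiceTurn`, `run_voice_turn`, and the three `fake_*` stand-ins are hypothetical names introduced here for illustration. In a real application, each callable would wrap the audio/transcriptions endpoint, a text LLM, and the audio/speech endpoint respectively.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoiceTurn:
    """Results of one chained voice turn (hypothetical type, for illustration)."""
    transcript: str     # what the user said, as text
    reply_text: str     # the exact text that will be spoken back
    reply_audio: bytes  # synthesized speech for reply_text


def run_voice_turn(
    audio_in: bytes,
    transcribe: Callable[[bytes], str],
    generate: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> VoiceTurn:
    """Run speech-to-text, then the LLM, then text-to-speech, one after another."""
    transcript = transcribe(audio_in)
    reply_text = generate(transcript)
    # reply_text is available before any audio exists, so it can be
    # logged, filtered, or edited here. This is the "control" the pattern buys.
    reply_audio = synthesize(reply_text)
    return VoiceTurn(transcript, reply_text, reply_audio)


# Stand-in steps so the sketch runs without network access.
# They only move bytes and text around; they are NOT real speech models.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_generate(prompt: str) -> str:
    return f"You said: {prompt}"


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


turn = run_voice_turn(b"hello", fake_transcribe, fake_generate, fake_synthesize)
print(turn.reply_text)
```

Because each step waits for the previous one to finish, the total response time is roughly the sum of the three calls, which is where the added latency comes from.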

Recommendations

If you need real-time interactions or transcription, use the Realtime API.

If realtime is not a requirement but you're looking to build a voice agent or an audio-based application that requires features such as function calling, use the Chat Completions API.

For use cases with one specific purpose, use the Transcription, Translation, or Speech APIs.
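These recommendations can be read as a short decision procedure, checked in order. The helper below is only an illustrative sketch: `recommend_api` and its two boolean parameters are hypothetical names, not part of any SDK.

```python
def recommend_api(needs_realtime: bool, needs_general_features: bool) -> str:
    """Map the recommendations above to an API name (hypothetical helper).

    needs_realtime: the app needs real-time interaction or transcription.
    needs_general_features: the app is a voice agent or audio-based app that
        needs features such as function calling.
    """
    if needs_realtime:
        return "Realtime API"
    if needs_general_features:
        return "Chat Completions API"
    # Single-purpose use cases fall through to the specialized APIs.
    return "Transcription, Translation, or Speech API"


print(recommend_api(needs_realtime=False, needs_general_features=True))
```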

Add audio to your existing application

Models such as GPT-4o or GPT-4o mini are natively multimodal, meaning they can understand multiple modalities as input and generate them as output.

If you already have a text-based LLM application with the Chat Completions endpoint, you may want to add audio capabilities. For example, if your chat application supports text input, you can add audio input and output: include audio in the modalities array and use an audio model, such as gpt-4o-audio-preview.
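A request with audio output might look like the sketch below. Only the `modalities` array and the `gpt-4o-audio-preview` model come from the text above. The `audio` parameter (with `voice` and `format`), the `"alloy"` voice, and the `message.audio.data` and `message.audio.transcript` response fields reflect the OpenAI Python SDK as understood at the time of writing and should be checked against the current API reference. The helper names `build_audio_chat_request`, `decode_audio`, and `request_spoken_reply` are hypothetical.

```python
import base64
from typing import Any


def build_audio_chat_request(
    user_text: str, voice: str = "alloy", audio_format: str = "wav"
) -> dict[str, Any]:
    """Build Chat Completions arguments that ask for text and audio output."""
    return {
        "model": "gpt-4o-audio-preview",
        "modalities": ["text", "audio"],  # the change that enables audio
        # Assumed output settings; verify names against the API reference.
        "audio": {"voice": voice, "format": audio_format},
        "messages": [{"role": "user", "content": user_text}],
    }


def decode_audio(b64_data: str) -> bytes:
    """Decode base64-encoded audio (as assumed for the response) into raw bytes."""
    return base64.b64decode(b64_data)


def request_spoken_reply(user_text: str, out_path: str = "reply.wav") -> str:
    """Send the request, save the audio, and return its transcript.

    Needs the `openai` package and an OPENAI_API_KEY in the environment,
    so it is defined here but not called.
    """
    from openai import OpenAI  # imported lazily so the sketch loads without it

    client = OpenAI()
    completion = client.chat.completions.create(
        **build_audio_chat_request(user_text)
    )
    message = completion.choices[0].message
    with open(out_path, "wb") as f:
        f.write(decode_audio(message.audio.data))
    return message.audio.transcript


request = build_audio_chat_request("Is a golden retriever a good family dog?")
print(request["modalities"])
```

Keeping the argument construction separate from the network call means an existing text-only request can be compared with the audio-enabled one field by field.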